Core Question: As agentic AI sends inference demand surging beyond what any near-term supply buildout can match, what becomes the scarce resource — and who captures the value from managing it intelligently?

Position: The premium has shifted from who can build the most raw capacity to who can orchestrate every available electron until large-scale supply comes online. Three Q1 2026 announcements make this visible at production scale.

Methodology: AI-assisted evidence infrastructure · Human-directed thesis · Primary-source verified

Executive Summary

Q1 2026 is the kind of start to a year that shreds New Year’s resolutions into best-laid-plans confetti. While chaos rules the day across the fast-evolving landscape of energy transition, AI infrastructure, and geopolitics, one certainty emerges in 2026: we have entered the agentic power demand inflection point.

This is something new. Consensus holds two positions on AI power demand. First, that the supply problem (the interconnection queue, the permitting backlog, the grid bottleneck) will be resolved by policy reform and growing investment in increasingly exotic generation that is perpetually five years from commercialization (e.g., small modular reactors). Second, that as inference costs fall, total power demand moderates. Both positions are structurally wrong, and the error compounds.

Inference — aka the lifeblood and heartbeat of agentic AI — now accounts for 80% of total AI energy footprint (Deloitte, 2026). The agentic era will multiply that structurally, and not gradually. A standard chatbot query triggers one inference call; an AI agent completing an enterprise workflow triggers 5-10, adding planning loops, tool selection, and verification at every step; a multi-agent system running around the clock triggers a persistent chain with no natural endpoint. IDC projects 1,000x inference demand growth by 2027. Cheaper inference does not moderate this demand — it accelerates it. The Jevons mechanism is operating as advertised, and Nvidia's hardware roadmap encodes it explicitly.

Meanwhile, three Q1 2026 announcements mark the production-scale arrival of the only viable near-term response: intelligent orchestration of installed capacity. Nvidia and Emerald AI's Conductor platform, CPower and Bentaus's live California grid demonstration, and Octopus/Kraken's acquisition of Uplight are not isolated product launches. They are the first components of an intelligence layer connecting two grids that the physical network cannot connect on any near-term timeline.

The grid-edge orchestration software play is already well-established and validated, but now it is beginning to move at serious scale. Capital is still mostly chasing training-era infrastructure bets. Meanwhile, the agentic-era orchestration play remains wide open.

This thesis breaks if AI inference workloads prove too sensitive for demand response at production scale: if operators discover that real-world flexibility degrades service-level agreement (SLA) performance in ways the controlled CPower/Bentaus demonstration has not yet surfaced.

Context

In the previous letter, A Tale of Two Grids, I documented the bifurcation of the traditional grid into two regimes. The 2,600 GW interconnection queue is "the electrification equivalent of Hormuz." The highest-value load defected around it, building captive behind-the-meter (BTM) gas infrastructure.

The result — two grids evolving in parallel:

Grid 1: the regulated clean grid decarbonizing at the speed of government

Grid 2: the captive AI grid burning gas today and contractually committed to cleaning it up by 2030-2035

Five days after Two Grids was published, three announcements began rewriting it and creating a second-order story.

An emerging consensus on AI power demand runs a familiar script: inference costs have dropped roughly 92% since early 2023; as they fall further, total power demand moderates; grid pressure concentrates in training, and as the industry shifts toward inference, the strain eases. This argument makes the same error that energy analysts made when horizontal drilling cut natural gas production costs 60% in the shale era, that semiconductor analysts made as transistor density doubled on schedule for two decades, and that solar analysts made as module costs fell 89% over a decade. In every case, cheaper production did not reduce consumption. It expanded the number of applications that consumption could support. The result was more demand, not less.

The emergence of the agentic era in 2026 amplifies this mechanism structurally. A standard chatbot runs one inference call per query. An AI agent completing an enterprise task runs 5-10. Nvidia's Vera Rubin architecture targets 15x token generation and 10x lower cost per token versus Blackwell. That is not a projection of moderated demand. That is Nvidia embedding the Jevons assumption directly into its product roadmap — every efficiency gain unlocks a proportionally larger increase in consumption.
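The arithmetic behind that claim can be sketched in a few lines. This is a rough illustration, not a forecast: it treats total inference spend as a stand-in for consumption, takes the 10x cost reduction and the 5-10 calls per task from the text, and introduces `task_growth` as a hypothetical input that does not come from any source.

```python
def total_multiplier(unit_cost_reduction: float,
                     calls_per_task: float,
                     task_growth: float) -> float:
    """Change in total inference spend versus a one-call-per-task baseline.

    unit_cost_reduction: factor by which cost per call falls (10 means 10x cheaper).
    calls_per_task: inference calls an agent makes where a chatbot made one.
    task_growth: hypothetical growth in the number of tasks being run.
    """
    return calls_per_task * task_growth / unit_cost_reduction


def break_even_task_growth(unit_cost_reduction: float,
                           calls_per_task: float) -> float:
    """Task growth at which total spend returns to its starting level."""
    return unit_cost_reduction / calls_per_task


# With a 10x cheaper call, agents at 5-10 calls per task absorb most of the saving.
low = break_even_task_growth(10, 5)    # task count only needs to double
high = break_even_task_growth(10, 10)  # saving is fully absorbed with no growth at all

# Hypothetical case: 10 calls per task and a tripling of tasks.
example = total_multiplier(10, 10, 3)

print(f"Break-even task growth: {high:.0f}x to {low:.0f}x")
print(f"Total spend in the hypothetical case: {example:.0f}x baseline")
```

The point of the sketch is the break-even line: once agents make 5-10 calls per task, a 10x efficiency gain lowers total consumption only if the number of tasks grows by less than 1-2x.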

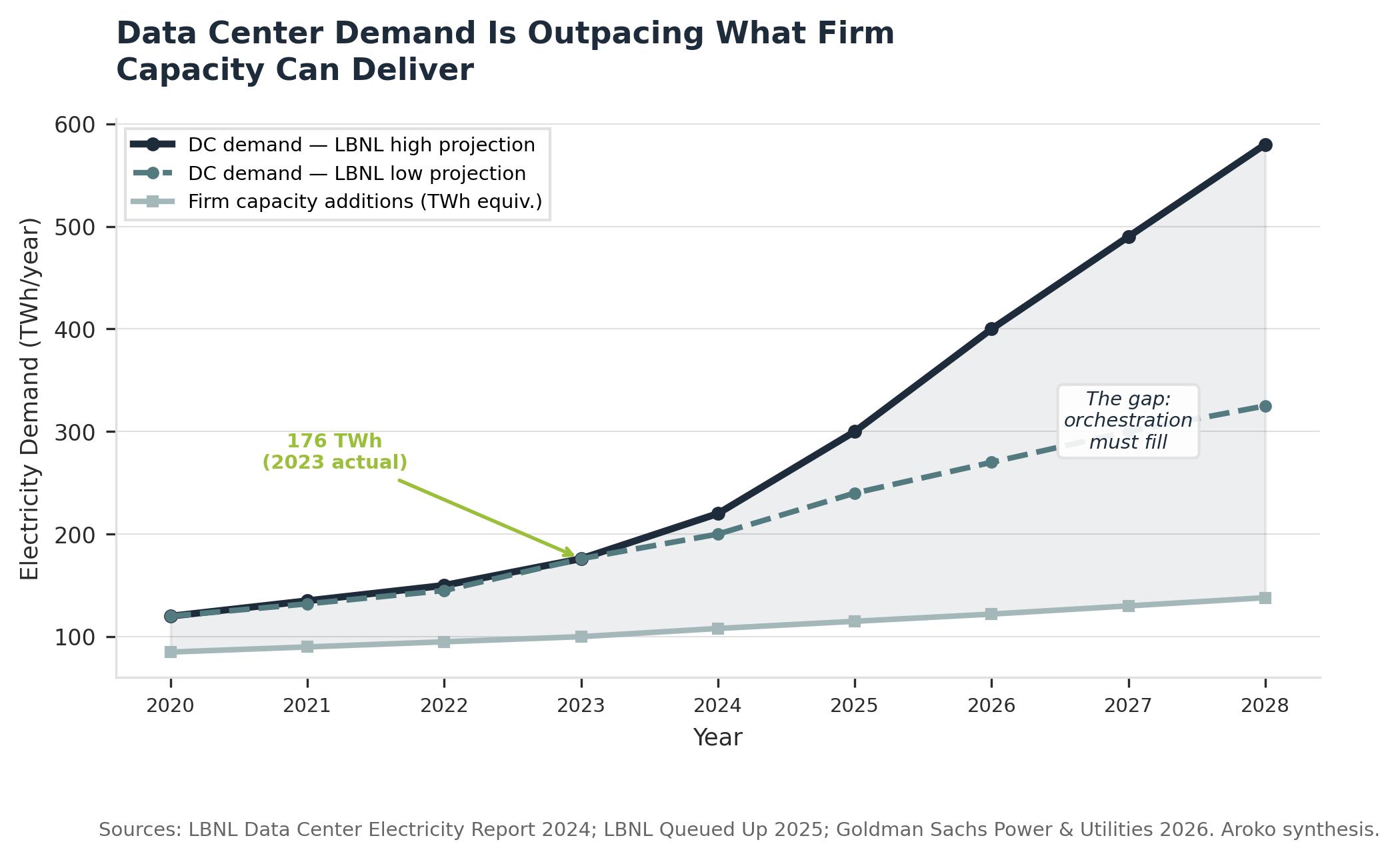

IDC puts a number on it: 1,000x inference demand growth by 2027. Goldman Sachs projects 165% growth in data center power demand by 2030 versus the 2023 baseline. These are not fringe estimates. They are the working assumptions embedded in every major AI infrastructure capex decision being made right now. The power demand curve does not flatten in the agentic era. It accelerates.

From this reality, one conclusion emerges: the energy sector's decade-long structural shift from linear asset building to network orchestration — from who can build the most to who can manage distributed assets most intelligently — has just collided with the most power-hungry infrastructure deployment in history. The Q1 2026 announcements are that collision made visible at production scale.

Mechanism & Evidence

The gap, in hard numbers

The U.S. interconnection queue holds 2,600 GW of projects. Annual capacity actually built: roughly 50 GW in 2024 (40 GW solar, 10 GW battery storage). The queue adds approximately 350-400 GW of new applications per year. For every gigawatt that gets built, seven to eight more enter. At the 2024 build rate, clearing the existing backlog would take 52 years, even if no new applications arrived.
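The queue figures reduce to two divisions. A minimal sketch, using only the numbers quoted above and assuming a constant build rate with no withdrawals from the queue (a simplification; real queues see heavy attrition):

```python
QUEUE_GW = 2_600                   # U.S. interconnection queue
BUILT_GW_PER_YEAR = 50             # roughly 40 GW solar + 10 GW storage in 2024
NEW_APPS_GW_PER_YEAR = (350, 400)  # approximate annual queue additions


def years_to_clear(backlog_gw: float, build_rate_gw: float) -> float:
    """Years to work through a fixed backlog at a constant build rate."""
    return backlog_gw / build_rate_gw


def entry_to_build_ratio(new_gw: float, build_rate_gw: float) -> float:
    """Gigawatts entering the queue for each gigawatt built."""
    return new_gw / build_rate_gw


clear_years = years_to_clear(QUEUE_GW, BUILT_GW_PER_YEAR)
ratio_low, ratio_high = (
    entry_to_build_ratio(n, BUILT_GW_PER_YEAR) for n in NEW_APPS_GW_PER_YEAR
)

print(f"Years to clear existing backlog: {clear_years:.0f}")
print(f"GW entering per GW built: {ratio_low:.0f} to {ratio_high:.0f}")
```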

Meanwhile, as outlined earlier, the demand side is accelerating. Bullish estimates are comparable to adding the entire annual electricity consumption of Texas to data center loads in five years.

The critical constraint is not total generation — it is firm, continuous power. Solar's capacity factor is 25%. Wind's is 35-45%. The new capacity clearing the queue is almost entirely intermittent. Data centers require 99.999% uptime. The supply being built is not the supply AI infrastructure can use.
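Nameplate capacity and usable output diverge by the capacity factor. A quick sketch, using the 40 GW of solar built in 2024 and the 25% capacity factor quoted in this section; the 8,760-hour year is an assumption (non-leap year), and the calculation ignores storage, curtailment, and time-of-day effects.

```python
HOURS_PER_YEAR = 8_760  # assumption: non-leap year


def average_output_gw(nameplate_gw: float, capacity_factor: float) -> float:
    """Average continuous output implied by a nameplate rating."""
    return nameplate_gw * capacity_factor


def allowed_downtime_minutes(uptime: float) -> float:
    """Minutes of downtime per year permitted by an uptime target."""
    return (1.0 - uptime) * HOURS_PER_YEAR * 60


solar_avg = average_output_gw(40, 0.25)
downtime = allowed_downtime_minutes(0.99999)

print(f"40 GW of solar averages about {solar_avg:.0f} GW of output")
print(f"99.999% uptime allows about {downtime:.1f} minutes of downtime per year")
```

On these inputs, 40 GW of nameplate solar behaves like roughly 10 GW of average output, set against a load that tolerates only a few minutes of interruption a year.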

The agentic demand acceleration

Inference already accounts for 80% of total AI energy footprint, up from one-third in 2023. The shift from training-dominated to inference-dominated AI compute has been fast and structural. The agentic era accelerates it further.

A January 2026 arXiv paper explains the mechanism as follows:

As the unit price of intelligence falls, downstream firms don't simply run the same workloads more cheaply — they endogenously redesign their agent architectures to consume dramatically more compute. Falling API prices induce developers to adopt deeper reasoning loops, larger context windows, tool-augmented multi-agent workflows, and chain-of-thought pipelines that multiply token consumption per task.

This is the Jevons mechanism in formal terms. It is also Nvidia's strategic roadmap in plain terms.

Nvidia’s NemoClaw, announced at GTC 2026, is the structural vehicle for this demand proliferation. It is open-source, runs on any chip, and deploys on enterprise servers and local edge hardware, not just hyperscale campuses. The strategic logic is unmistakable: Nvidia is positioning its software layer as the standard orchestration infrastructure for AI agents running on hardware it may not have manufactured. This mirrors the ARM licensing model applied to the AI agent stack. The company's business model has become a direct force multiplier for the Jevons mechanism: every additional inference call on any device, on any hardware, running on NemoClaw's stack is proof of Nvidia's bet.

The orchestration response

Three Q1 2026 announcements mark the production-scale response.

On March 23, Nvidia and Emerald AI launched Conductor alongside AES, Constellation, Invenergy, NextEra Energy, Nscale, and Vistra. To be clear, these are serious utility players. Conductor orchestrates AI compute flexibility alongside on-site generation, batteries, and BTM resources to deliver grid-responsive power management while maintaining AI workload quality commitments. The announced target: 100 GW of flexible AI capacity. Why is this significant? This is a production architecture co-signed by the companies that will deploy it — not a research pilot.

The proof of concept arrived a month earlier. In February, CPower, Bentaus, and Supermicro ran a live demonstration on California's grid: a cluster of servers running Nvidia B200 GPUs cut power draw by 75% in under 20 milliseconds, responding to real-time dispatch signals while maintaining AI SLAs. That response speed matches a financial market clearing. By comparison, roughly two-thirds of operational power plant capacity in the U.S. today takes more than 1 hour to fully ramp.
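A minimal sketch of the control logic such a demonstration implies, assuming a controller that converts a dispatch signal into a cluster-level power target. The `Cluster` class, the `target_power_kw` function, and the 1,000 kW and 200 kW figures are hypothetical placeholders; only the 75% cut, the 20 ms response, and the one-hour ramp come from the text. How a target is enforced on real hardware is outside this sketch.

```python
from dataclasses import dataclass


@dataclass
class Cluster:
    baseline_kw: float  # hypothetical steady-state draw
    min_kw: float       # hypothetical floor below which SLAs would be at risk


def target_power_kw(cluster: Cluster, curtail_fraction: float) -> float:
    """Power target after a dispatch signal asks for a fractional cut.

    The request is honored only down to the cluster's SLA floor.
    """
    if not 0.0 <= curtail_fraction <= 1.0:
        raise ValueError("curtail_fraction must be between 0 and 1")
    requested = cluster.baseline_kw * (1.0 - curtail_fraction)
    return max(requested, cluster.min_kw)


cluster = Cluster(baseline_kw=1_000.0, min_kw=200.0)

# A 75% cut, as in the demonstration, fits above the hypothetical floor.
cut_75 = target_power_kw(cluster, 0.75)

# A deeper request is clamped at the floor rather than breaching it.
cut_90 = target_power_kw(cluster, 0.90)

# Response-time gap between the demonstration and a one-hour plant ramp.
speed_ratio = 3_600_000 / 20  # milliseconds in an hour / 20 ms

print(f"Target after 75% cut: {cut_75:.0f} kW")
print(f"Target after 90% request: {cut_90:.0f} kW")
print(f"Compute responds roughly {speed_ratio:,.0f}x faster than a one-hour ramp")
```

The floor is the design choice that matters: it is where the falsification risk named in the Executive Summary would show up, because a production workload with a high floor has less flexibility to sell.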

On March 24, Octopus Energy's Kraken acquired a majority stake in Uplight, which manages 8.5 GW of controllable flexible load across 85 U.S. utilities — 8 of the 10 largest. Uplight already orchestrates thermostats, EV chargers, and commercial HVAC systems. Kraken's integration adds AI compute loads to that dispatch stack.

The industrial cogeneration parallel is exact. From the 1970s through the 1990s, large industrial manufacturers (paper mills, chemical plants, refineries) built on-site cogeneration when utility power proved too unreliable for continuous process loads. They defected. Then the Public Utility Regulatory Policies Act of 1978 (PURPA) required utilities to purchase surplus power from qualifying cogeneration facilities at avoided cost. The factories re-entered the grid as suppliers, not customers. Revenue from grid participation reshaped the economics of the original cogeneration decision retroactively. The Q1 2026 announcements are the AI-era PURPA: the commercial framework that wires Grid 2 back into Grid 1 as a dispatchable asset. Industrial cogenerators sold surplus kilowatts. AI data centers sell flexible demand, the ability to shed hundreds of megawatts in milliseconds, and if NemoClaw's distributed compute vision materializes, so will every enterprise server room running an AI agent stack.

The Macro-Tech-Geo Matrix

| | NOW (2026) | 2-3 YEARS (2027-2028) | 5+ YEARS (2030+) |
|---|---|---|---|
| MACRO | $600B+ hyperscaler capex, $450B AI-tied. 96 GW global data center power demand, 90% AI-driven. BTM gas buildout accelerating: 25 GW projected over five years. Gas midstream and equipment roll-up plays from Issue #2 active. | IDC's 1,000x inference demand growth materializes. Grid-edge software M&A accelerates as consolidation premium becomes visible. Equipment constraints persist: GE Vernova 80 GW backlog, 128-week transformer lead times. One Big Beautiful Bill Act (OBBBA) deployment cliff creates distressed-acquisition vintage. | Two-tier AI infrastructure cost structure: orchestration-integrated operations run flexible power at lower cost; static campuses absorb scarcity premiums. Grid-edge software margin capture compounds annually. |
| TECH | Inference = 80% of AI energy footprint. Blackwell cuts cost per token 10-15x; Jevons mechanism activates. NemoClaw launches open-source agentic stack on any chip. Conductor bridges Grid 2 to Grid 1 at production scale. CPower/Bentaus 75% demand response proof of concept validated. | Vera Rubin (H2 2026) cuts token cost another 10x; consumption response amplifies. NemoClaw scales to enterprise edge; inference proliferates beyond campuses. Distributed AI compute demands grid orchestration at a scale Kraken/Uplight was not originally sized to handle. | Distributed agentic compute nodes are the dominant AI infrastructure form. Grid orchestration software is the margin-capture layer. First commercial small modular reactors (SMRs) deploy. Carbon capture and storage (CCS) at hyperscaler sites proven or abandoned. |
| GEO | FERC tightens co-location rules. PUC counter-attack maturing: Virginia, Texas, Georgia, Ohio frameworks active. Hormuz closure strengthened the sovereign energy frame from Issue #2. China gallium control architecture intact: suspended, not dismantled. | PUC exit fee frameworks crystallize as standard deal structure for BTM loads above 25 MW. Data localization mandates in EU and Asia expand distributed compute footprint, reinforcing NemoClaw's distributed architecture thesis. FERC demand response classification for AI compute becomes contested policy question. | Regulatory compact between BTM operators and grid regulators matures. Sovereign AI compute plus sovereign power becomes geopolitical organizing frame. China's manufacturing dominance in renewables and batteries creates strategic dependency risk for Western AI infrastructure buildout. |

Capital Allocation Playbook

Two Grids identified three capital plays. All three remain structurally valid — one has sharpened, one demands emphasis it did not receive.

Play 1 — Gas midstream tollbridge (Core; carry forward)

Hyperscalers are building captive gas infrastructure regardless of what software layer runs above it. Williams Companies, GE Vernova, VoltaGrid, Entergy: the molecule tollbridge is under active construction. BTM gas assets with Conductor integration carry materially higher long-term value than pure captive-generation plays. A BTM gas plant participating in demand response markets earns revenue from the grid it partially exited, and that changes the return profile. Underwrite state utility commission friction as a deal-structure certainty, not a contingency: Virginia's 85% minimum billing requirement, Texas SB6, Georgia's 100 MW approval threshold, and Ohio's AEP minimum charge structure. Hinshaw pipeline exemption laterals remain the fastest-to-market structure.

Play 2 — Electrical equipment roll-up (Buyout; carry forward, narrowing window)

GE Vernova's backlog stands at 80 GW against roughly 20 GW annual output, sold through 2029. Power transformer lead times: 128 weeks with an estimated 30% U.S. shortfall. The acquisition playbook from oilfield services during the shale boom applies directly. The window is 12-24 months — OEM capacity additions will eventually normalize lead times, and when they do, the window closes.

Play 3 — Captive decarbonization (Venture; carry forward, updated return profile)

Carbon capture and storage (CCS), small modular reactors (SMRs), and enhanced geothermal systems (EGS) remain the technology vectors. Google's 400 MW Broadwing CCS plant and Amazon's $500M X-Energy SMR program are the anchor proof-of-concept investments. Demand response revenue from flexible compute is a fourth decarbonization pathway Issue #2 did not model. A data center earning grid services revenue while running AI workloads begins decarbonizing its economics before CCS or SMR technology catches up. This lengthens the runway to technology maturity while improving the return profile on the venture bets.

Play 4 — Grid-edge orchestration software (Double down; established thesis, not yet captured)

This is not a new thesis. The shift from linear asset builders to network orchestrators — value migrating downstream to whoever manages distributed assets most intelligently — has been the structural direction of the energy sector for a decade. More than $1 trillion in value was projected to shift to grid-edge orchestration platforms by 2030. Capital has been slow to recognize it. The Q1 2026 signals are the catalyst that makes it undeniable.

Kraken/Uplight at 8.5 GW across 85 utilities sets the template. As NemoClaw pushes AI inference to the enterprise edge, the addressable orchestration market expands from hyperscale campuses to every enterprise server running an AI agent stack. The consolidation premium for platforms with utility relationships plus AI compute orchestration capability has not been captured. Double down.

Where capital belongs:

Concentrate here: Grid-edge orchestration software; BTM infrastructure with grid participation optionality built in.

Active but maturing: Gas midstream tollbridge assets; electrical equipment manufacturers with hyperscaler contracts — the window is open but attention is growing.

Reduce: Legacy utilities with high AI load concentration modeled as captive, long-duration revenue. The prosumer architecture puts that revenue model under increasing strain.

The frame that misses the moment: The industry is mid-shift from training-era AI — centralized clusters, intermittent workloads, massive static point loads — to agentic-era AI: distributed inference, continuous operation, edge deployment at scale. Capital that extrapolates the training-era architecture into the next decade is not just missing the moment at the margin. It is structurally misaligned with the infrastructure being built right now. Who can deliver 500 MW to a campus is still an important question in 2026, but who can orchestrate millions of inference calls per second across distributed hardware, in real time, against a grid already at capacity is where the focus shifts.

Operator Implications

Three immediate pressure points demand operator attention.

First — Power is a strategic asset. Treat it like one.

Your AI compute footprint is about to multiply. Not because you planned for it, but because the agentic era makes it structurally inevitable. Every workflow you automate, every agent you deploy, every sub-agent that spawns adds 5-10 inference calls where one existed before. Operators treating power as a facilities decision today will find themselves competing for constrained capacity at scarcity premiums within 24-36 months. The companies locking in demand response agreements and grid participation architecture now are not being conservative. They are securing optionality that will be unavailable at any price when the agentic demand surge fully arrives.

Second — Your AI cost model is built for the chatbot era.

The Jevons mechanism is already in your P&L, and it will accelerate. Cheaper inference per token did not reduce total AI infrastructure spend — it expanded the number of things you could build on inference. The agentic era multiplies that pattern by an order of magnitude. CFOs who modeled AI infrastructure costs against current token costs are carrying a structural underestimate. Scenario-plan your compute footprint assuming 10x inference call volume per AI workflow within 18 months. Then determine whether your power access, vendor agreements, and architecture can absorb it.
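One way to run that scenario is a simple headroom check. The sketch below is illustrative only: the function names, the 2,000 calls per second, the 500 joules per call, and the 4,000 kW of contracted power are hypothetical placeholders to be replaced with an operator's own measurements. Only the 10x volume multiplier comes from the text.

```python
def required_power_kw(calls_per_second: float, joules_per_call: float) -> float:
    """Average power needed to serve a call rate (joules per second = watts)."""
    return calls_per_second * joules_per_call / 1_000.0


def can_absorb(current_cps: float,
               joules_per_call: float,
               volume_multiplier: float,
               contracted_kw: float) -> tuple[bool, float]:
    """Whether contracted power covers the scaled-up call volume, and the power needed."""
    needed = required_power_kw(current_cps * volume_multiplier, joules_per_call)
    return needed <= contracted_kw, needed


# Hypothetical operator: placeholder inputs, not measurements.
fits_today, kw_today = can_absorb(2_000, 500, 1, contracted_kw=4_000)
fits_at_10x, kw_at_10x = can_absorb(2_000, 500, 10, contracted_kw=4_000)

print(f"Today: {kw_today:,.0f} kW needed, fits = {fits_today}")
print(f"At 10x call volume: {kw_at_10x:,.0f} kW needed, fits = {fits_at_10x}")
```

A fuller model would let energy per call fall over the same 18 months; holding it constant here keeps the check conservative and makes the gap easy to see.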

Third — The orchestration platform decision you make in 2026 is a 5-year architectural commitment.

NemoClaw and Conductor are not neutral infrastructure. They are platform bets in the category of choosing AWS in 2010 or committing to Salesforce in 2012. Operators embedding AI agent workflows in NemoClaw's stack and power management in Conductor's platform are making commitments that will shape infrastructure optionality through 2030. Evaluate them with the scrutiny you apply to core enterprise system decisions, not standard software procurement timelines.

Watchlist

1. CPower/Bentaus production deployment results — The February 2026 controlled demonstration used batch workloads; production real-time inference carries tighter latency tolerances. Results maintaining SLAs at 75% load reduction validate the bridge architecture at commercial scale. Degradation results are the primary falsification signal for this thesis.

2. Kraken-Uplight integration timeline — When does the combined platform begin dispatching AI compute loads alongside distributed energy resources in live ISO markets? Speed of integration determines whether this is a 2026 or 2028 story for the grid-edge software play.

3. NemoClaw/OpenClaw enterprise adoption velocity — Agentic deployment at the enterprises named at GTC 2026: Salesforce, Cisco, Adobe, CrowdStrike. IDC's 1,000x inference demand projection depends on this uptake curve. The faster agent stacks like NemoClaw proliferate, the faster the firm power constraint tightens and the sooner the orchestration premium crystallizes.

4. FERC demand response classification for AI compute — A positive classification — formally designating large AI data centers as demand response resources eligible for capacity market payments — would dramatically accelerate bridge economics by creating a direct revenue line for grid participation.

5. GE Vernova and Siemens Energy backlog — Letter #2's equipment roll-up signal, still active. A backlog increase confirms the acquisition window remains open; stabilization signals OEM capacity additions are catching up and the window is compressing.

Synthesis

Aroko Letter #2 analyzed two grids running parallel: one regulated and decarbonizing slowly, one captive and burning gas. Capital plays are following the defection.

This Letter is about what comes next. Not reunification — the physical network cannot bridge the gap on any near-term timeline. Not permanent separation — the entities that defected are wiring back in as participants, not customers. Something more structurally significant is underway: an intelligence layer is being built between the two grids at exactly the moment the agentic era begins accelerating inference demand in a direction no power supply-side buildout can offset before 2029.

The power supply constraint does not moderate in the agentic era. It proliferates. The entities building the software layer that manages that proliferation intelligently — Conductor, Kraken/Uplight, and the platforms that follow — are building the most valuable infrastructure in the AI-energy nexus right now.

Capital has moved on the training-era infrastructure bets. The agentic-era orchestration play remains wide open. The Q1 2026 announcements are the signal opening the door for capitalizing on inference-related flexible power over the next 12-18 months.

Evidence Base

This letter draws on 18 primary and secondary sources across four reliability tiers. Keystone claims (IDC's 1,000x inference demand projection, Nvidia's 15x token generation figure, the CPower/Bentaus 75% power reduction result, the Kraken/Uplight 8.5 GW figure, LBNL's interconnection queue data, and Goldman Sachs's 165% data center demand growth projection) are verified against primary source documents and assigned Tier A reliability. The Jevons framing is substantiated by a January 2026 arXiv paper and three independent analyst reports. The 5-10x agent inference call multiplier is sourced from AgentMeter and Agent Wiki cost analysis.

Medium-strength claims include the timeline for full Kraken/Uplight integration and the pace of NemoClaw enterprise adoption — both carry genuine uncertainty and are marked as working assumptions. The FERC demand response classification outcome is speculative and will be re-evaluated upon Q3-Q4 2026 docket activity.

Primary sources: LBNL (Queued Up 2025; Data Center Electricity Report 2024); EIA Annual Energy Outlook 2025; Goldman Sachs Power & Utilities 2026; IDC FutureScape 2026; Nvidia GTC 2026 Keynote; NVIDIA Newsroom; CPower/PRNewswire; Businesswire (Kraken/Uplight). Supporting: Deloitte TMT Predictions 2026; arXiv Jan 2026; Tom's Hardware; DataCenterHawk; AgentMeter/Gris Labs; Agent Wiki; Morgan Stanley Energy Markets 2026.

About Aroko: Aroko provides strategic advisory and capital allocation intelligence at the intersection of energy transition, technology infrastructure, and geopolitical risk. Our analytical process combines proprietary evidence infrastructure with human-directed thesis formation. Every keystone claim is verified against primary sources, and all editorial judgment and capital allocation framing is conducted by Aroko’s team. The Letter is published biweekly for emerging technology operators and the investors who back them — with implications for both portfolio positioning and operational decision-making.

Disclaimer: This article is for informational purposes only and does not constitute financial, investment, legal, or tax advice. The opinions expressed regarding macro trends and infrastructure investments are solely those of the authors. Past performance does not guarantee future results. Readers should consult with a qualified financial professional before making any investment decisions.